Safety‑critical Interface

eHMI for AV

system design

product design

user research

Overview

A research-driven exploration of how autonomous vehicles can “speak” through movement amplified by light and color so pedestrians know when it is actually safe to cross.

Tools

Pen & paper, Miro, Cinema 4D, After Effects, MAXQDA, SoSci Survey

Duration

6 months

Outcomes at a glance

Increased pedestrians’ confidence to cross when the eHMI prototype confirmed yielding behavior compared to conventional vehicle behavior without any external interface.

Demonstrated that reinforcing vehicle movement beats symbolic instructions for communicating intent non‑verbally in ambiguous crossing situations.

Derived a semantic framework and concrete design criteria (e.g., “red to block, green to release”) for future external HMIs in safety‑critical contexts.

Showed how prototyping-as-research can de‑risk eHMI concepts before investing in expensive technical development or on‑road trials.

Identified patterns that transfer beyond automotive into any system where behavior, not screens, carries meaning.

Challenge

Autonomous vehicles remove the human driver as a social actor in traffic, and with the driver go the eye contact, gestures, and subtle cues that pedestrians and cyclists currently rely on to decide whether to cross.

While sensors can detect vulnerable road users, the vehicle’s intent remains invisible from the outside, especially at crossings, intersections, and shared spaces where hesitation is dangerous.

The real risk is not “Can the car see me?” but “What will it do next and can I trust it?”

Solution

I designed a conceptual external human–machine interface that uses light, motion, and established color semantics to amplify what the vehicle is already doing instead of explaining system state.

The eHMI clarifies yielding, blocking, and neutral behavior by visually reinforcing movement, reducing ambiguity at crossings and shared spaces without adding cognitive load.

Outcomes & impact

Participants consistently reported higher confidence to cross when the eHMI prototype confirmed yielding behavior compared to identical scenarios without an external interface.

Visual reinforcement of movement made vehicle intent feel clearer and more predictable, reducing hesitation even when pure vehicle behavior would technically have been “safe enough.”

Non‑verbal cues that aligned with existing semantics (red to block, green to release, neutral cyan) were interpreted more consistently than concepts relying on text, symbols, or projections.

The project resulted in a set of design criteria that can be reused by automotive teams to avoid over‑expressive, decorative, or language‑dependent eHMI concepts.

The work demonstrated how research‑through‑design and role play can safely test intent communication before any on‑road deployment.

Broader transfer

The eHMI framework for mapping signals to meaning and action is applicable to other safety‑critical systems where human trust depends on how people anticipate what a system will do next (e.g. robots in warehouses, micromobility, or public infrastructure).

By understanding what existing elements (e.g., signals, color, shape, layout, and motion) already mean to people, I can amplify them instead of piling on novel, more complex elements.

Discover

How do people already read vehicles?

Purpose

Turn an abstract “AVs need to communicate” brief into a specific understanding of how pedestrians and cyclists already interpret vehicle behavior today and why that breaks when the human driver disappears.

Approach

Conducted an extensive literature review on human perception in traffic, non‑verbal communication, and movement as a carrier of intent.

Reviewed human‑factors research on safety in mixed traffic to understand existing risk models around vulnerable road users (VRUs).

Performed a taxonomy analysis of ~70 existing eHMI concepts across academia, industry prototypes, marketing concepts, and speculative design.

Clustered concepts by modality (light, text, projection), semantic strategy (instruction vs indication), and complexity to surface patterns.

Key insights

Body language

Movement is already a language: pedestrians continuously read speed, deceleration, and trajectory as intentional behavior.

Intent over explanations

VRUs need intent (“Will this car yield?”), not explanations (“The system has detected you and is in XYZ mode”).

Clarity

Additional layers of text, icons, and expressive animation often increase ambiguity, cognitive load, or distraction instead of clarifying intent.

Signal over show

Many existing concepts optimize for novelty or expressiveness rather than semantic clarity in real‑world conditions.

Co‑Design

Making mental models visible

Purpose

Avoid biasing people with speculative AV/eHMI explanations and instead surface how vulnerable road users already assign meaning to traffic signals and vehicle behavior.

Approach

Mapped my own assumptions up front (which signals VRUs notice, where attention goes on a vehicle, which public codes influence behavior) to make biases explicit.

Recruited a diverse group of vulnerable road users: pedestrians, cyclists, car‑oriented participants, multimodal commuters, and tech‑driven mobility users.

Facilitated a co‑design workshop as an observational setting, staying in the background while participants thought aloud and debated.

Used semantic differentials (safe–unsafe, ambiguous–unambiguous) on traffic visuals to identify which signals carry strong, shared meaning.

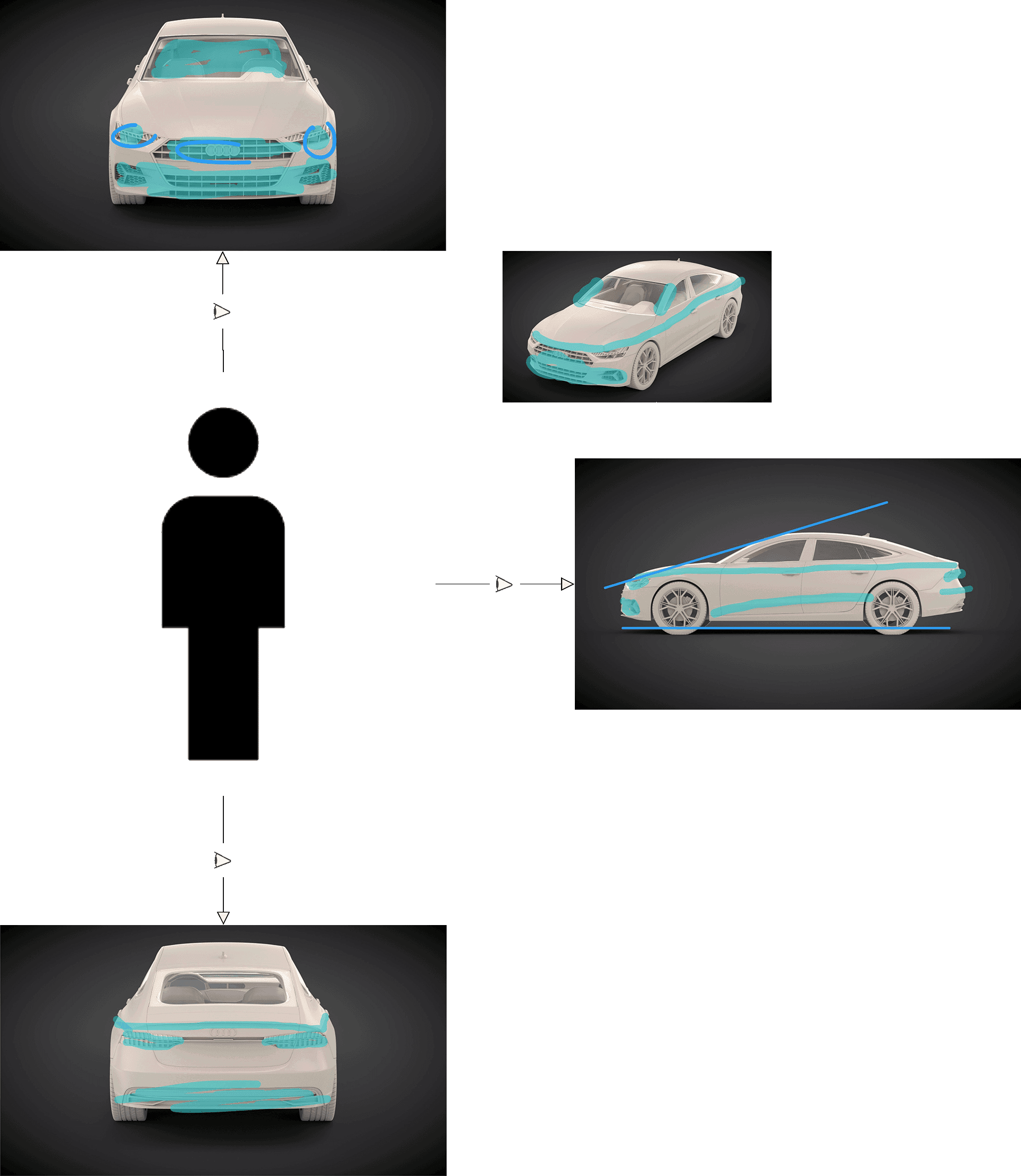

Ran “role switching” exercises where participants imagined themselves as the vehicle communicating with pedestrians, and salience mapping to locate attention hotspots on a vehicle.
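One way to read the semantic differentials is to treat a signal as carrying strong, shared meaning when ratings sit far from the scale midpoint and vary little between participants. The sketch below assumes a 7-point scale; the function name, the thresholds, and the ratings are illustrative placeholders, not values or data from the workshop.

```python
from statistics import mean, pstdev


def has_shared_meaning(ratings, midpoint=4.0, min_offset=1.5, max_spread=1.0):
    """Return True if ratings are both decisive and consistent.

    All thresholds are hypothetical and assume a 7-point scale
    (1 = safe, 7 = unsafe).
    """
    offset = abs(mean(ratings) - midpoint)  # distance from "undecided"
    spread = pstdev(ratings)                # disagreement between participants
    return offset >= min_offset and spread <= max_spread


# Placeholder ratings, not workshop data.
red_light = [7, 6, 7, 7, 6]     # decisive and consistent
novel_symbol = [1, 7, 4, 2, 6]  # participants disagree

print(has_shared_meaning(red_light))
print(has_shared_meaning(novel_symbol))
```

Checking offset and spread together separates signals that everyone reads the same way from signals that only average out to a clear value.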

Assumption map

Up‑front map of my own assumptions: which signals VRUs notice, where attention goes on a vehicle, and which public codes influence behavior.

Salience mapping

Result of participants marking which vehicle areas they look at first, revealing consistent focal points and ‘critical zones’ from a VRU perspective.

Key insights

Shared codes

Across very different VRU types, participants converged on the same basic codes (e.g., red as blocking, green as releasing), indicating robust shared semantics to build on.

Behavior first

In role‑switching and discussions, participants kept going back to stopping behavior and positioning; additional projections or “talking cars” were often described as distracting or “too much.”

Reinforced behavior

When sketching or describing ideas, participants instinctively suggested signals that reinforced stopping, waiting, or passing, rather than entirely new channels, hinting that amplification of behavior would be easier to interpret.

Evidence to narrow

The workshop confirmed and refined my earlier assumptions, giving me a more concrete, evidence‑based foundation to narrow the solution space.

Define

From signals to a semantic framework

Purpose

Converge from scattered insights to a coherent, defensible design direction: what exactly should an eHMI communicate, how does meaning form, and where are the boundaries?

Approach

Synthesized research and co‑design findings into a semantic problem statement: the challenge is interpretation and trust, not sensor capability.

Drew on communication theory and Krippendorff’s notion of shared understanding to model how signals become meaning and afford action.

Created semantic diagrams that explicitly followed the vulnerable road user’s perspective: vehicle action → perception → meaning → decision to cross or wait.

Translated these diagrams into a set of guiding principles and constraints for any eHMI concept I would prototype.

Problem statement

Vulnerable road users do not need explanations of what an autonomous vehicle is doing. They need the vehicle’s behavior reinforced in a way that makes their existing perception unambiguous, so they can decide safely when to cross.

Form follows meaning

Meaning guides action

The way we perceive an object invokes meaning, which affords certain actions.

A chair with four legs invokes stability and affords the action of sitting.

Interaction Model

To design a physical interface, I translated the psychological model into an interaction model:

each signal acts as a trigger that invokes meaning and affords an immediate, safe action for the pedestrian.

"Humans do not respond to the physical properties of things,

but to what they mean to them."

Klaus Krippendorff

Interaction Architecture

Instead of a traditional information architecture focussing on screens or navigation, this framework helped to model how:

a vehicle action is perceived

perception translates into meaning

meaning enables or inhibits human action

Linear Interaction Flow

Reasoning about affordances

This abstraction made it possible to reason about which signals are expected, which meanings they convey, and which actions they afford.
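The chain from vehicle action to perception, meaning, and afforded action can be sketched as a small lookup table. This is a minimal illustration under my reading of the color semantics described in this case study. The type names, the `interpret` function, and the "keep observing" action for neutral driving are assumptions for the example, not part of the original framework.

```python
from dataclasses import dataclass
from enum import Enum


class Behavior(Enum):
    """Vehicle behavior that the eHMI reinforces."""
    YIELDING = "yielding"
    BLOCKING = "blocking"
    NEUTRAL = "neutral"


@dataclass(frozen=True)
class Signal:
    color: str            # what the pedestrian perceives
    meaning: str          # what that perception invokes
    afforded_action: str  # what the meaning enables or inhibits


# Hypothetical mapping: red blocks, green releases, cyan is neutral.
SEMANTICS = {
    Behavior.YIELDING: Signal("green", "lane is released", "cross"),
    Behavior.BLOCKING: Signal("red", "lane is blocked", "wait"),
    Behavior.NEUTRAL: Signal("cyan", "vehicle is just passing", "keep observing"),
}


def interpret(behavior: Behavior) -> Signal:
    """Follow the flow: vehicle action -> perception -> meaning -> action."""
    return SEMANTICS[behavior]


for behavior in Behavior:
    signal = interpret(behavior)
    print(f"{behavior.value}: {signal.color} -> {signal.meaning} -> {signal.afforded_action}")
```

Keeping exactly one signal per behavior makes gaps and conflicts easy to spot: a new signal either maps onto an existing row or needs its own meaning and action.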

Design Direction

Intent over information

Communicate “I will stop for you” instead of “The system has detected you and is braking.”

Movement as body language

Treat vehicle motion as the primary communication channel and amplify it visually instead of competing with it.

Non‑verbal only

Avoid text, icons, and spoken language to reduce cognitive load and cultural dependency.

Reinforcement over instruction

Visual cues should confirm and clarify behavior already in motion, not issue new “orders” to pedestrians.

These principles deliberately constrained the solution space and acted as a semantic guardrail whenever a “cool” idea clashed with real‑world interpretability.

Develop

Prototyping as a research tool

Purpose

Make the semantic principles tangible and testable without pretending to design a production‑ready system; treat the prototype as a structured question to participants.

Approach

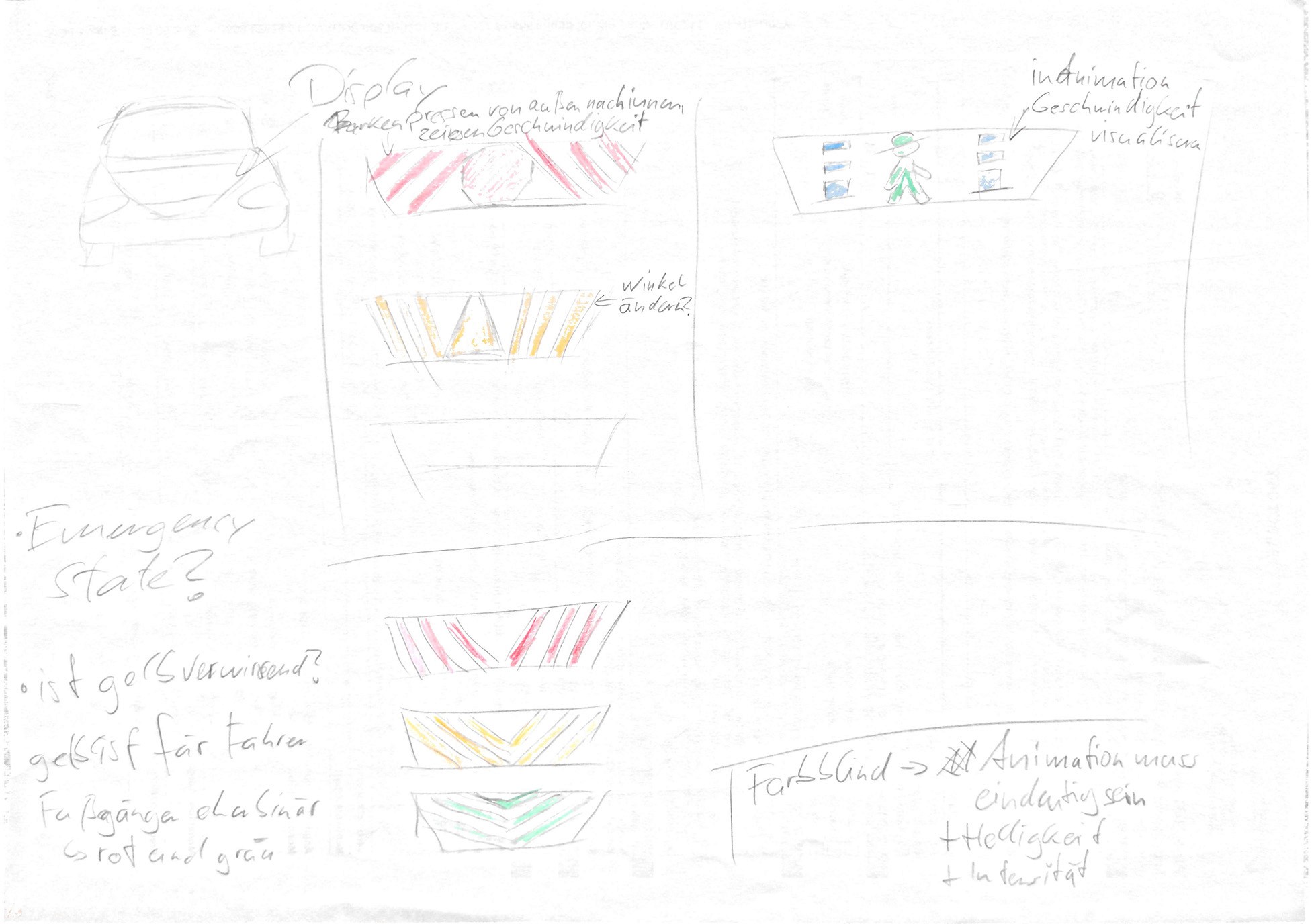

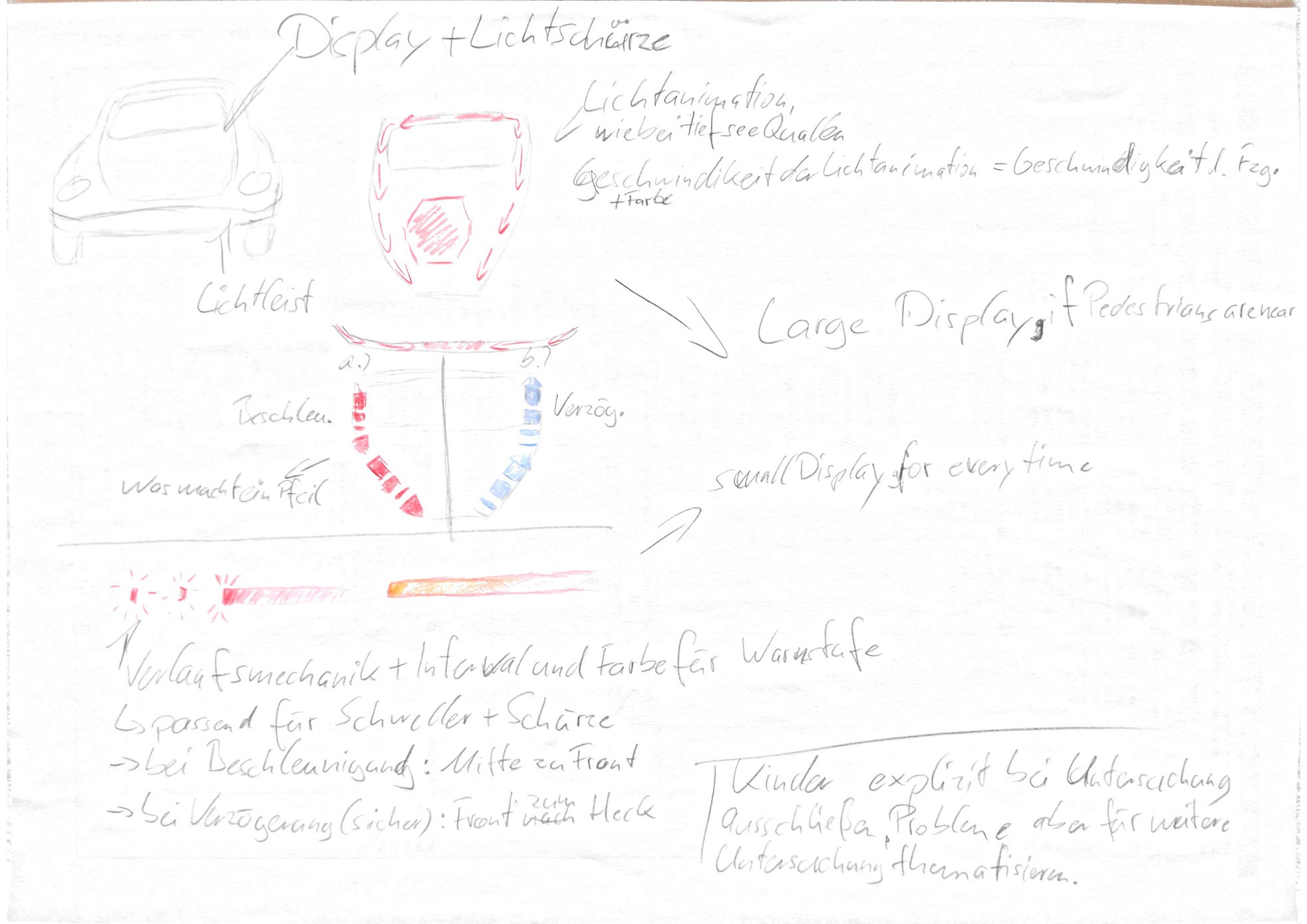

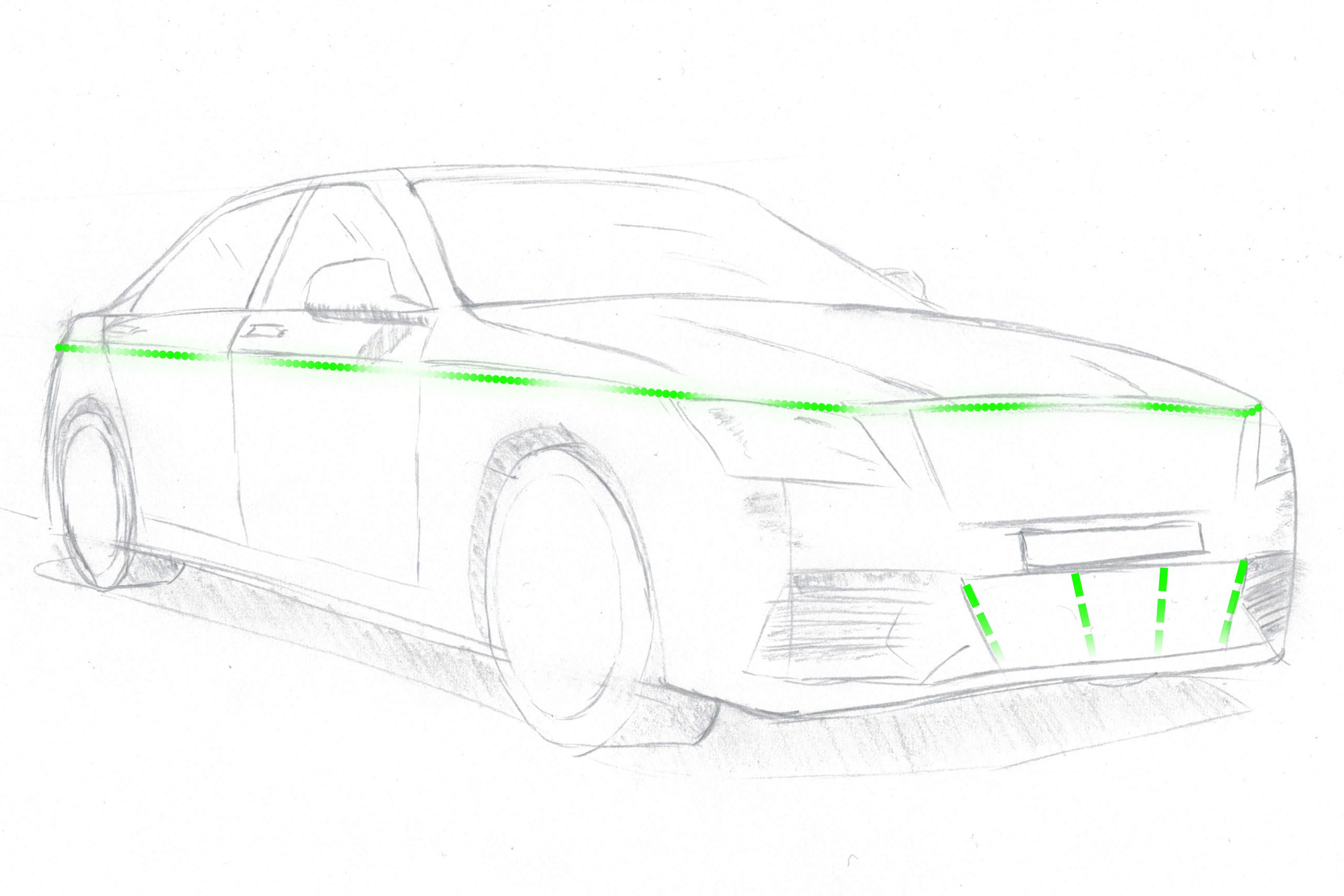

Started with divergent sketching to explore different configurations of light placement, motion patterns, and color use across the vehicle body.

Evaluated concepts against the established principles: does this amplify movement, or distract from it? Does it add unnecessary symbolism?

Consolidated a coherent signaling system into a virtual prototype, modeled in 3D and animated to show approach, yielding, blocking, and neutral driving.

Chose light as the primary modality for visibility and controllability, and positioned it along salient contours and front zones VRUs already watch.

Placed the lights in areas that manufacturers already accentuate, making the placement both perceptually salient and brand‑aligned.

Synchronized light motion and timing tightly with vehicle deceleration and stopping to visually link cause and effect.
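One simple way to express the link between light and deceleration is to drive the light animation from vehicle speed instead of from a timer, so the light completes exactly when the vehicle stops. The sketch below assumes a linear mapping. The function and parameter names are hypothetical and do not describe how the animated prototype was built.

```python
def light_progress(current_speed, approach_speed):
    """Fraction (0.0 to 1.0) of the light animation to display.

    Assumes a linear mapping: 0.0 at approach speed, 1.0 at standstill.
    Speeds share one unit (for example m/s).
    """
    if approach_speed <= 0:
        raise ValueError("approach_speed must be positive")
    progress = 1.0 - current_speed / approach_speed
    # Clamp so speeds above approach speed do not yield negative progress.
    return max(0.0, min(1.0, progress))


# A vehicle braking from 10 m/s to a stop.
for speed in (10.0, 7.5, 5.0, 2.5, 0.0):
    print(speed, light_progress(speed, approach_speed=10.0))
```

Because the light is a function of speed, any change in braking shows up in the signal immediately, which keeps cause and effect visually coupled.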

Sketched exploration

Sketch to 3D Prototype

Results

Minimal semantic system

The prototype embodied a minimal but consistent semantic system: red clearly blocking the lane, green clearly releasing it, neutral cyan for “just passing.”

Deliberate scope

Scope was deliberately constrained: no claims about technical feasibility or edge‑case handling, to keep focus on interpretation and trust.

Test

Role play in ambiguous scenarios

Purpose

Evaluate whether the prototype actually communicates intent as intended, and how it affects perceived safety and confidence compared to a vehicle without eHMI.

Approach

Designed two core scenarios:

1. A vehicle approaches, decelerates, stops, and yields.

2. A vehicle approaches, slightly decelerates, and passes without yielding.

Ran each scenario twice to allow direct comparison: once with the eHMI active, once with a “conventional” vehicle.

Used role play with the same participants from the co‑design workshop, placing them in the pedestrian’s shoes at a crossing.

Presented sequences from a pedestrian viewpoint and guided a semi‑structured narrative where pairs discussed aloud when they would cross.

Captured both decisions and reasoning, then analyzed recordings through qualitative content analysis (following Kuckartz) in MAXQDA.

Role‑play setup

Pairs of participants evaluated scenarios from a pedestrian’s viewpoint, discussing aloud whether and when they would cross.

Prototype scenario

Animation of the tested scenario: the vehicle approaches, decelerates, and either yields or passes, with and without the eHMI signals.

Analysis Method

Qualitative content analysis (Kuckartz) in MAXQDA to code decisions, reasoning, and expressed (un)certainty.

Key Findings

Feeling acknowledged

Participants reported feeling more acknowledged and “seen” when the eHMI confirmed yielding, even though the physical vehicle behavior was identical.

Where ambiguity remains

Ambiguity surfaced when signals were too subtle or too complex, directly informing where to iterate.

Higher mental effort without eHMI

In no‑eHMI conditions, participants hesitated longer and verbalized more doubt, needing more discussion to negotiate the vehicle’s intent. This indicates higher mental effort in deciding whether it was safe to cross.

Deliver

Iteration, Validation & Design Criteria

Purpose

Close the loop responsibly: refine the eHMI based on qualitative feedback, validate directionally, and distill reusable design criteria instead of a “finished product.”

Approach

Simplified overly expressive visual behaviors to reduce interpretive effort and avoid “light show” aesthetics.

Tightened temporal alignment between light behavior and deceleration/stopping to reinforce causal understanding.

Clarified visual distinctions between yielding, blocking, and neutral states to avoid ambiguous in‑between patterns.

Ran a structured survey using the refined prototype to compare perceived safety, clarity, and confidence. The survey reused the core scenarios and participant group from the earlier role play to capture directional change rather than fresh, naïve reactions.

Refined prototype

A/B comparison used in the survey

Identical scenarios with and without the refined eHMI signals, shown to the same participant group to capture directional changes in clarity and confidence.

Key Results

Directional improvement

In the follow‑up survey, participants directionally reported higher clarity of intent and greater confidence to cross when interacting with the refined eHMI prototype compared to no eHMI.
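A directional comparison with the same participants in both conditions can be summarized as a per-participant tally instead of a significance test. The sketch below shows that idea only; the function name and the ratings are placeholders, not survey data.

```python
def directional_change(without_ehmi, with_ehmi):
    """Count participants who rated higher, equal, or lower with the eHMI.

    Both lists hold one rating per participant, in the same order.
    """
    if len(without_ehmi) != len(with_ehmi):
        raise ValueError("ratings must be paired per participant")
    tally = {"higher": 0, "equal": 0, "lower": 0}
    for before, after in zip(without_ehmi, with_ehmi):
        if after > before:
            tally["higher"] += 1
        elif after < before:
            tally["lower"] += 1
        else:
            tally["equal"] += 1
    return tally


# Placeholder confidence ratings, not results from the study.
confidence_without = [3, 4, 2, 5, 3]
confidence_with = [4, 4, 3, 5, 2]

print(directional_change(confidence_without, confidence_with))
```

A tally like this shows the direction of change without implying statistical strength, which suits a small group that has already seen the scenarios.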

Pragmatic design criteria

The work converged into a set of pragmatic design criteria, for example:

An eHMI should amplify vehicle movement, not replace it.

It should visually reinforce action, not explain internal system state.

It should leverage existing semantic colors:

red to block the lane

green to release it

driving may be expressed in a distinct, non‑semantic color.

Adaptable across brands

These criteria remain agnostic to brand and implementation and can be adapted by different manufacturers and research teams.

Learnings

Design for meaning

Designing for safety‑critical contexts means designing for meaning, not for interfaces. The work starts from how people interpret behavior, not from what we can display.

Movement as language

Movement is already a rich language. Trying to replace it with icons or text might create a second, conflicting language instead of clarifying the first.

Prototypes as probes

In this project, the eHMI prototype functioned as a hypothesis about meaning, not a pre‑product. Treating it that way made it easier to ask the right questions instead of defending a solution.

Semantic over novel

Semantic consistency (e.g., red blocks, green releases) felt more reliable to participants than visual novelty when they had to decide quickly whether to cross.

Open then converge

Intentionally opening the problem space wide before converging prevented premature solutioning and forced me to make my assumptions explicit.

Reduce, then refine

This project reinforced my bias toward removing signals rather than adding them until what remains is unambiguous, even if that still needs further testing under real‑world pressure.

What I Carry Forward

In complex systems, I now explicitly look for existing meanings and “languages” and ask how to improve the system by clarifying and amplifying them before introducing any novelty.
